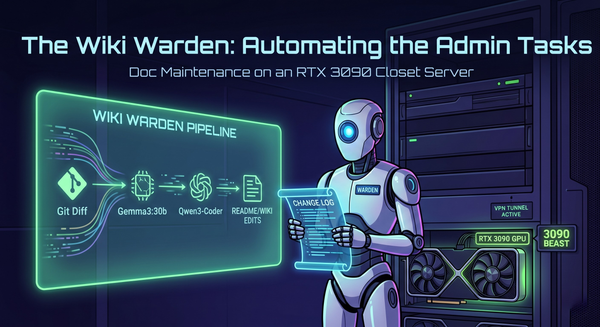

The Million-Row Scar: Why I’m Building a "DB-Lite" Analytics Engine

In the self-hosting world, we talk a lot about "owning your data." What we don’t talk about is the actual, crushing weight of that ownership.

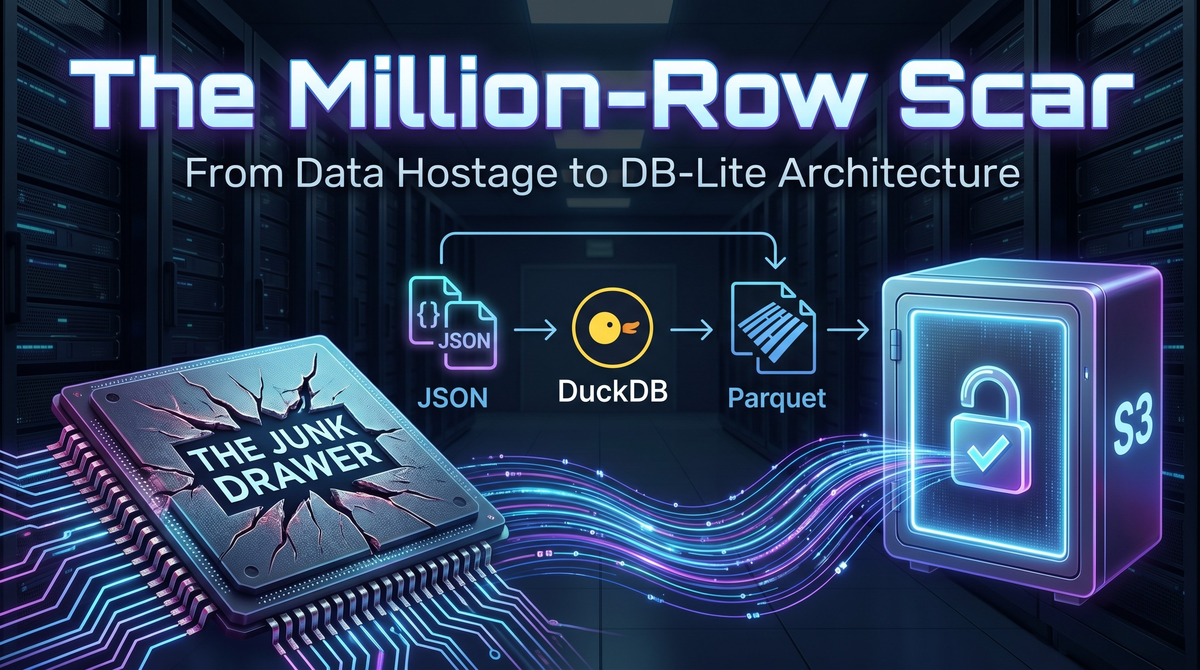

I have the scars to prove it. In previous projects, I’ve watched databases swell to 100+ million rows because I used them as a "junk drawer" for every click, log, and heartbeat. I’ve lived through the trauma of a database rebuild that took hours while the service sat dark and having the uptime monitor mock me. When your database gets that bloated, you start being a hostage to your own infrastructure.

While scaling The Hangout Spot past 100 users recently, I had a silent panic moment that reminded me why I need to break that cycle once and for all.

The Heart Attack Migration

While I was iterating on a new feature, a bad migration script slipped into my production pipeline. In a flash, my push_subscriptions table was wiped, which is the literal lifeblood of my app's notifications.

I spent the next few hours in a state of high-alert, surgically restoring that specific table from a 5-day-old backup. It worked, and my users were none the wiser, but it was a massive wake-up call. I was still leaning too hard on a single database for everything. If my DB was lean and focused only on critical user data, a restore would be a minor surgery. If it were bloated with millions of log rows, that same mistake would have been a fatal crash.

Flying Blind vs. Scaling Small

As a solo dev, I’m often flying blind. I need data to know if people are actually using the "Hype Board" or if the "Squad" feature is a confusing mess. That data guides where I spend my limited PTO hours coding. But I am not about to let analytics/logs kill my $15 VPS.

I needed a way to log feature usage without the database bloat. After some research and a tip from a friend, I found the answer: DuckDB.

The Architecture: Decoupling the Junk Drawer

Instead of hammering PostgreSQL with every click, I’m shipping a decoupled ELT (Extract, Load, Transform) pipeline that feels like enterprise engineering on a bootstrapped budget.

- Lightweight JSON Logs: The app writes events directly to local JSON files. This is basically zero overhead for the Node.js API and keeps the main database untouched and fast.

- DuckDB (The Transformer): I use DuckDB to process those files. It’s an in-process analytical database that is mind-blowingly fast at reading JSON and converting it into structured data.

- S3 & Parquet: DuckDB transforms the raw logs into Parquet files—the gold standard for big data—and ships them off to S3.

Why This is a Game Changer

By moving my logs to S3, my PostgreSQL database stays light. My backups are now tiny, my migrations are less risky, and my $15 VPS isn't wasting IOPS on table scans for old logs. Even my beta instance is getting a boost, pulling real-time data via bucket replication.

But more importantly, S3 opened a door I previously thought was closed. Once I figured out the logic for shipping data to S3, I realized I was no longer limited by my VPS storage. This clears the path for the features I’ve been dreaming of but was too afraid to host—like Photo and Video sharing. That hours-long recovery taught me to respect the database. DuckDB and S3 are how I’m finally moving past it.